My previous post gave a high-level overview of one approach to web application development and testing and the new challenges that came with data engineering. This post describes how new testing solutions evolved to handle these new data engineering challenges.

Background

The ETL tool I’m building forks off of

odo, an open-source project for the Blaze

ecosystem.

The one really good thing about odo is how easy it is in order to add in

different data formats to transform to. There are four primary methods you need

to implement to support a new backend:

-

odo.resource: Match a regular expression to a Python object describing your data format in the context of theodograph, and return an instance of that object. For example, the regular expression*.parquetmay match against the proxy classParquet, andParquet()with attributes is returned. -

odo.discover: Generate a schema of the data given a resource defined byodo.resourceand return it to the user. This method parses the data as described by your Python backend library and returns a schema generated by the third-party Python librarydatashape, a data description protocol also developed by Blaze. -

odo.convert: Converts a resource returned fromodo.resourceto a resource type different from that of the original resource as defined byodo.resource. This method traverses an instance ofnetworkx.DiGraph(), dynamically generated during the callimport odo. -

odo.append: Appends a resource returned fromodo.resourceto a resource of a different resource type.

And that’s it! Your backend is now integrated into your copy of odo and ready

for use.

Furthermore, odo.resource and odo.discover are functional already; the

same inputs will always generate the same outputs. Suffice it to say that these

methods aren’t the testing bottleneck. odo.convert and odo.append are each

about as functional as you can expect; the same outputs will cause the same

errors, as long as the resources behave in a predictable manner. Additionally,

odo.convert calls odo.resource to create an empty instance of the

destination format given and then calls odo.append to append to the empty

resource — a wrapper method. Really, you only have to worry about one method:

odo.append().

So what’s not so great about odo?

First, it’s no longer actively maintained. According to this

issue, odo is deprecated and users

are encouraged to use intake

instead. So for odo users, if you want to merge functionality somebody else

made, there is no central place to do so because everyone has their own forks,

because nobody is assigned the maintainer role. It’s not a huge problem as it

still is very easy to add functionality to odo, but it would have been really

nice in order to consolidate some of the effort into rebuilding critical paths

for open-source formats like Apache ORC or Apache Parquet.

Second, there is no real test suite that comes bundled in with odo. This

matters because as a fork, I expect to be able to use the functionality of my

base right out of the box, and as we know from earlier, code without tests is

broken code. In production, many pieces of odo lacked tests for numerous edge

cases, which slowed down development significantly.

Third, the code is somewhat dirty and the core is not well documented. This wasn’t a huge issue in the beginning because the API meets expectations in functionality and performance, but it makes productionizing the code or iterating on the design abstractions difficult, both of which eventually became issues. For example:

-

Registering a new backend with the rest of the

odograph, versus just the intermediary format, to fully utilize the power ofodorequires implementing a catchall decorator:odo.convert.register($FORMAT, $EXISTING_FORMAT) def convert_existing_format_to_format(instance_of_existing_format): # code return instance_of_format # To use all other existing formats beyond $EXISTING_FORMAT odo.convert.register($FORMAT, object) def convert_object_to_format(object): return odo.convert( $FORMAT odo.convert( $EXISTING_FORMAT, object ) )Either that or implement each of the other resource conversions manually :frowning: This is much better done in a try/catch block in the core code, IMHO, but it’s unclear where this would be added.

-

Sometimes, I had to play a game of whack-a-mole with

odo’s design abstractions, where poor understanding of the design meant one problem led to a design decision that led to another problem. For example, sinceodois a data transformation tool, and not a data transfer tool, it NO-OPs when the destination format and the source format are the same (because why would you transform to the same format?). I patched this early on by transforming to an “intermediate” format that nobody should want to use in the final tool. The problem then becomes you make multipleodocalls, and some behaviors ofodo(such asodo.Temp(), a temporary file memoizer that deletes files after theodocall finishes) don’t work the way you want them to (in the case ofodo.Temp(), deleting the file after the intermediate format is generated). -

Python’s selling point is not performance. Performance with reasonable system constraints is a thing to worry about when trying to transfer terabytes of data, quickly, without crashing the actual database you want to transfer to with unacceptable resource utilization. On top of that, many libraries, such as those of Apache ORC, are written in C++ and Java, and I need to invoke connections between Python and other languages in order to use said libraries. This led to situations like the ordinal mismatches that may lead to data corruption, as well as issues with logging (for example, Java’s log4j does not tie in well with Python’s [

logging] module).

Fourth, the API is a bit loose. Features developed for one resource have to be

re-implemented manually for all resources. For example, if you want to implement

a feature to set a batch size, to read a set number of records from one location

to another at a time, you have to implement the “how” to fetch for every

specific resource. odo’s forgiving nature also means that if a feature isn’t

implemented, the application does not fail. What ends up happening is continued

development results in a mosaic of different features, with the same feature

potentially having different behaviors depending on the resource at a specific

release. While this doesn’t crash applications and lends itself to patching

bugs, it isn’t good for predictable, user-friendly behavior. This can be

mitigated by implementing custom decorators, such as odo.batch(), which you

can set to hard fail by default.

Starting Off

I can empathize with the original developers why there aren’t any tests. odo

comes with a massive suite of functionality built in, which may have been built

ahead of any tests and focused solely on functionality for the project to

survive. It’s just that it’d be difficult, if not impossible, to test all the

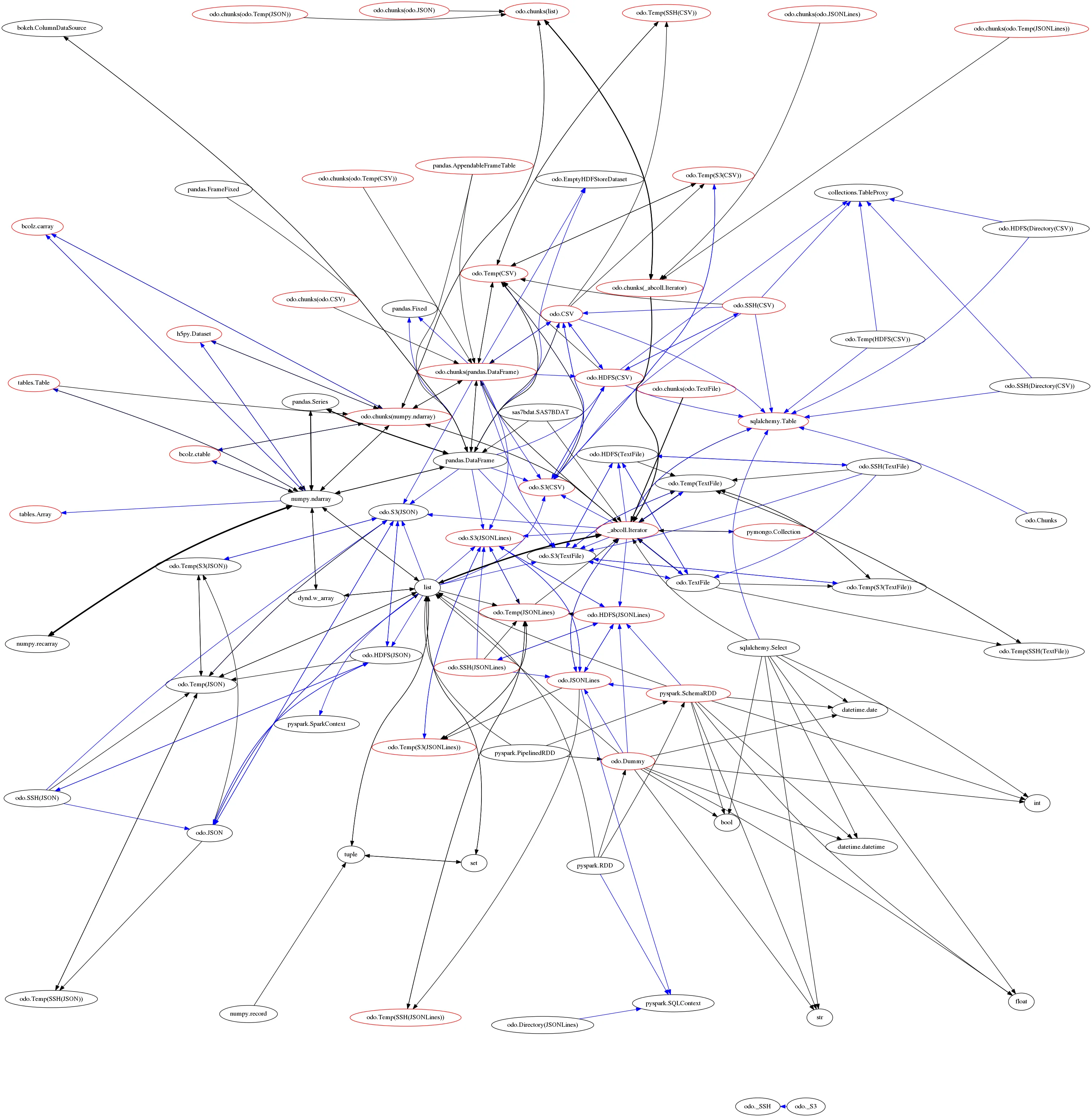

permutations at the end. Here’s a visualization of the different types of data

transfers possible:

Yeah. At this point, I didn’t know what I didn’t know, but I did know that I didn’t know a lot.

I needed to start somewhere, and I thought I couldn’t go wrong with approaching

the problem through a functional lens, so I decided to divide up the problem

based on types. I created a number of tests for every type and dependency, and I

stuffed them into a harness structure that I thought intuitively made sense.

This is available below as Appendix A. pytest loved this format because

each test was an individual test function, and pytest could hook onto these and

print very nice reports. An example test report is available as Appendix D.

Problems began almost immediately:

-

The data was tightly coupled to the code; I would create a pandas dataframe with some small amount of test data, and the creation process would happen in the Python file. Data migrations are hard enough with data alone, but at least that is scriptable. When mixed with code, data can’t really be migrated without manual input (or a lot of effort in scripting).

-

The code was also almost exactly the same regardless of the input data, resulting in minimal code reuse and heavy code duplication. Code duplication —> technical debt.

-

It couldn’t easily take into account any new dimensions of the problem I would face in the future, the so-called unknown unknowns. If there was a new dimension of the problem (e.g. for every type and backend, we need to test the type at a particular column index), I would have to manually write tests on top of every existing dimension of the problem in order to address this new dimension. I would have to spend actual time and energy coming up with how to handle these cases, and that wasn’t acceptable given the limited developer resources we had.

Then the VP of engineering came over, took one look at the tests I had been writing, and mentioned that I wasn’t taking into account other behaviors common to databases, such as primary/shard keys and subsequent record deduplication. Boom new dimension, and unhandled problem. He recommended I come up with a new design for this test harness because migrating the old one again and again would be meaningless and time consuming. I hated to admit it at the time, but he was right. This effort was unsustainable.

First Iteration: Describing tests as data

Several requirements went into choosing what type of data format I wanted to store my tests as:

-

I wanted to isolate the data away from the code, because I could add data (and hence test cases) much more easily.

-

I also knew that there were certain “hooks” in my test execution lifecycle that my code would be looking for (e.g. checking for a particular type of assertion).

-

The format had to be fairly universally recognized by programming languages and frameworks, human-parseable, and well understood.

-

Since the tests are not part of the critical execution path of the application in production, and because regression tests are slow due to communications through third-party APIs (and resultant data transmission through network and to disk), high performance was not a first-class priority.

-

I had to take care of the unknown unknowns, and it had to be easy to not only update given test cases (manually and programatically), but also version test cases if there was a breaking change (e.g. I left the project and somebody wanted to reuse a particular key).

All this lent itself to choosing a semi-structured data format, which in my case

became json.

One thing I did not know how to do in pytest at the time was how to isolate

each test by itself. I could only execute all the tests at once as a single

test — which then printed success. The only workaround I had was to log the

output as best as I can, and then manually parse those logs by eye whenever I

executed the tests. I figured this would be a good way in order to practice

writing tests as data, whether or not it worked.

This is of course an unsustainable solution; no person, much less a machine, can parse a stream of logs in order to generate test results, and creating a failure-resistant logging system is its own Herculean task.

I only got a few test cases written (less than ten) before I went stir-crazy and looking for a solution to this mission-critical problem.

This harness structure is available at Appendix B. An example test report is available as Appendix E. I would advise anybody reading to not use a solution like this.

Second Iteration: Disambiguating, Isolating, and Discretizing Tests

I had listened to the QA team to see whether they had any insights into working

with pytest. As luck would have it, one QA engineer found a way to use

pytest.mark.parametrize() with

metaprogramming.

The essence is you create a stub function, into which you pass in some amount of

data processed by a special function pytest_generate_tests(). I had not known

that you could use the parametrize method in that manner. I did use the method

in order to stand up individual resources for each regression in my first

generation harness, using

indirect=True

and passing parameters unique to each test to a pytest fixture.

This solution effectively addressed the concern of running tests individually, and was the cornerstone behind the iterative efforts of establishing data-driven testing.

One other very nice aspect of pytest_generate_tests() is the ability to pass

in CLI flags into the execution environment. It’s just argparse underneath.

For example, my manager mentioned how having a timestamp as a unique identifier

for a given test case would effectively distinguish test cases from each other

with little mental overhead and hassle, better than my idea of having unique

test case descriptions (as long form text). So in order to run a particular

test, you could execute:

username@hostid:/path/to/kio$ pytest \

-q kio/tests/regression \

--test-case-id $TIMESTAMPAnd it will filter all test cases to retrieve the unique test case as identified

by timestamp. In addition, flags exist to filter by tags (e.g. do not run tests

tagged with 'aws' if you have an air-gapped environment and want to verify

functionality in production), by transfer type, and others.

I’ve been using this system for about three or four months, and I am very happy with the ability with the amount of code reuse and code deduplication. The major code commitment in the test harness is the comparison logic between any two resources, which is unavoidable. I believe Paul Graham said you picked the right design abstraction if you’re doing new things all the time, and this comes closer than anything else I used. The language-agnostic nature of data means that different services have a single communications medium; for example, a fuzzer or a property-based tester like hypothesis could generate JSON test cases with the same schema and different arguments as a form of automated test-driven development, to increase productivity and maximize developer impact.

This harness structure is available as Appendix C. An example test report is available as Appendix F.

Lessons Learned

Ultimately, ETL boils down to two things: a robust, complete, extensible, and easily mappable type system , and everything else. Ensure that all data formats can map directly or indirectly to and from your type system, and you have a functional ETL pipeline. For example, it doesn’t matter if your type system doesn’t have WKT support, if you can stringify your WKT objects and recognize/parse them at the very end. The problem is that system needs to handle every kind of bad data allowed to exist by your choice of programming language, and there’s many points where that can go wrong (and where some, like those resulting from closed-source software, are out of your power to control). The happy path is very short, sweet, and happy, and the edge paths are dark and full of terrors.

Appendices

Appendix A: 1st generation harness directory structure

regression

├── __init__.py

├── kinetica_to_csv

│ ├── __init__.py

│ ├── test_double.py

│ ├── test_float.py

│ ├── test_int.py

│ ├── test_long.py

│ └── test_str.py

├── kinetica_to_kinetica

│ ├── __init__.py

│ ├── test_double.py

│ ├── test_float.py

│ ├── test_int.py

│ ├── test_long.py

│ └── test_str.py

├── kinetica_to_parquet

│ ├── __init__.py

│ ├── test_char128.py

│ ├── test_char16.py

│ ├── test_char1.py

│ ├── test_char256.py

│ ├── test_char2.py

│ ├── test_char32.py

│ ├── test_char4.py

│ ├── test_char64.py

│ ├── test_char8.py

│ ├── test_float.py

│ └── test_int.py

├── kinetica_to_postgresql

│ ├── __init__.py

│ ├── test_char128.py

│ ├── test_char16.py

│ ├── test_char1.py

│ ├── test_char256.py

│ ├── test_char2.py

│ ├── test_char32.py

│ ├── test_char4.py

│ ├── test_char64.py

│ ├── test_char8.py

│ ├── test_date.py

│ ├── test_datetime.py

│ ├── test_int16.py

│ ├── test_int8.py

│ ├── test_int.py

│ ├── test_misc.py

│ ├── test_str.py

│ └── test_time.py

└── postgresql_to_kinetica

├── __init__.py

├── test_char128.py

├── test_char16.py

├── test_char1.py

├── test_char256.py

├── test_char2.py

├── test_char32.py

├── test_char4.py

├── test_char64.py

├── test_char8.py

└── test_int.py

common

├── fixtures.py

├── __init__.py

├── utils_kinetica_csv.py

├── utils_kinetica_kinetica.py

├── utils_kinetica_parquet.py

├── utils_kinetica_postgres.py

└── utils.pyAppendix B: 2nd generation harness directory structure

regression_new

├── common

│ ├── __init__.py

│ └── utils.py

├── _data

│ ├── csv

│ │ ├── 1.csv

│ │ └── __init__.py

│ └── __init__.py

├── __init__.py

└── kinetica_to_aws

├── __init__.py

├── kinetica_to_aws_s3.metadata.json

└── test_kinetica_to_aws_s3.py

common_new

├── comparisons

│ ├── aws_s3_kinetica.py

│ ├── csv_kinetica.py

│ ├── __init__.py

│ └── utils.py

├── __init__.py

├── resources

│ ├── __init__.py

│ ├── resource_aws_s3.py

│ ├── resource_csv.py

│ └── resource_kinetica.py

└── utils.pyAppendix C: 3rd generation harness directory structure

regression_new_v2

├── comparisons

│ ├── _csv.py

│ ├── esri_shapefile.py

│ ├── __init__.py

│ ├── parquet_dataset.py

│ └── parquet.py

├── conftest.py

├── _data

│ ├── csv

│ │ ├── 1.csv

│ │ ├── 2010_Census_Populations_by_Zip_Code_truncated.csv

│ │ ├── Consumer_Complaints_truncated.csv

│ │ ├── Consumer_Complaints_truncated_no_headers.csv

│ │ ├── Demographic_Statistics_By_Zip_Code_truncated.csv

│ │ ├── demos.cleaned.flights_first_25_with_ordering.csv

│ │ ├── demos.cleaned.movies_first_25_with_ordering.csv

│ │ ├── demos.cleaned.nyctaxi_first_25_with_ordering.csv

│ │ ├── demos.cleaned.shipping_first_25_with_ordering.csv

│ │ ├── demos.cleaned.stocks_first_25_with_ordering.csv

│ │ ├── deniro.csv

│ │ ├── deniro_no_headers.csv

│ │ ├── FL_insurance_sample_truncated.csv

│ │ ├── __init__.py

│ │ └── Most_Recent_Cohorts_Scorecard_Elements_truncated.csv

│ ├── gis

│ │ ├── __init__.py

│ │ ├── ne_110m_admin_0_countries

│ │ │ ├── __init__.py

│ │ │ ├── ne_110m_admin_0_countries.cpg

│ │ │ ├── ne_110m_admin_0_countries.dbf

│ │ │ ├── ne_110m_admin_0_countries.duplicate.dbf

│ │ │ ├── ne_110m_admin_0_countries.duplicate.shp

│ │ │ ├── ne_110m_admin_0_countries.duplicate.shx

│ │ │ ├── ne_110m_admin_0_countries.prj

│ │ │ ├── ne_110m_admin_0_countries.shp

│ │ │ └── ne_110m_admin_0_countries.shx

│ │ └── ne_110m_coastline

│ │ ├── __init__.py

│ │ ├── ne_110m_coastline.cpg

│ │ ├── ne_110m_coastline.dbf

│ │ ├── ne_110m_coastline.prj

│ │ ├── ne_110m_coastline.shp

│ │ └── ne_110m_coastline.shx

│ ├── __init__.py

│ ├── parquet

│ │ ├── __init__.py

│ │ ├── KTOOL-331_2.parquet

│ │ ├── test_parquet_char128_basic.parquet

│ │ ├── test_parquet_char128_erroneous.parquet

│ │ ├── test_parquet_char128_null.parquet

│ │ ├── test_parquet_char16_basic.parquet

│ │ ├── test_parquet_char16_erroneous.parquet

│ │ ├── test_parquet_char16_null.parquet

│ │ ├── test_parquet_char1_basic.parquet

│ │ ├── test_parquet_char1_erroneous.parquet

│ │ ├── test_parquet_char1_null.parquet

│ │ ├── test_parquet_char256_basic.parquet

│ │ ├── test_parquet_char256_erroneous.parquet

│ │ ├── test_parquet_char256_null.parquet

│ │ ├── test_parquet_char2_basic.parquet

│ │ ├── test_parquet_char2_erroneous.parquet

│ │ ├── test_parquet_char2_null.parquet

│ │ ├── test_parquet_char32_basic.parquet

│ │ ├── test_parquet_char32_erroneous.parquet

│ │ ├── test_parquet_char32_null.parquet

│ │ ├── test_parquet_char4_basic.parquet

│ │ ├── test_parquet_char4_erroneous.parquet

│ │ ├── test_parquet_char4_null.parquet

│ │ ├── test_parquet_char64_basic.parquet

│ │ ├── test_parquet_char64_erroneous.parquet

│ │ ├── test_parquet_char64_null.parquet

│ │ ├── test_parquet_char8_basic.parquet

│ │ ├── test_parquet_char8_erroneous.parquet

│ │ ├── test_parquet_char8_null.parquet

│ │ ├── test_parquet_float_basic.parquet

│ │ ├── test_parquet_int_basic.parquet

│ │ ├── userdata1.parquet

│ │ ├── userdata2.parquet

│ │ ├── userdata3.parquet

│ │ ├── userdata4.parquet

│ │ └── userdata5.parquet

│ └── parquet_dataset

│ ├── example_dataset.zip

│ └── __init__.py

├── __init__.py

├── _logs

├── test_cases

│ ├── autogenerated_s3_parquet_dataset_to_kinetica_datasets_created_on_2018-09-06T14:17:19.297983.metadata.json

│ ├── aws_s3_csv_to_kinetica.metadata.json

│ ├── aws_s3_parquet_dataset_to_kinetica.metadata.json

│ ├── basic_csv_to_kinetica.metadata.json

│ ├── basic_kinetica_to_aws_s3_csv.metadata.json

│ ├── basic_kinetica_to_csv.metadata.json

│ ├── basic_kinetica_to_kinetica.metadata.json

│ ├── basic_kinetica_to_parquet.metadata.json

│ ├── basic_kinetica_to_postgresql.metadata.json

│ ├── basic_parquet_dataset_to_kinetica.metadata.json

│ ├── basic_parquet_to_kinetica.metadata.json

│ ├── basic_postgresql_to_kinetica.metadata.json

│ ├── basic_shapefile_to_basic_shapefile.metadata.json

│ ├── basic_shapefile_to_httpd_kinetica.metadata.json

│ ├── basic_shapefile_to_kinetica.metadata.json

│ ├── basic_shapefile_to_s3_shapefile.metadata.json

│ ├── chunks_parquet_to_kinetica.metadata.json

│ ├── chunks_s3_parquet_to_kinetica.metadata.json

│ ├── chunks_s3_shapefile_to_kinetica.metadata.json

│ ├── chunks_shapefile_to_kinetica.metadata.json

│ ├── __init__.py

│ ├── s3_parquet_to_kinetica.metadata.json

│ ├── s3_shapefile_to_basic_shapefile.metadata.json

│ └── s3_shapefile_to_kinetica.metadata.json

├── test_stub.py

├── _tmp

│ └── __init__.py

└── utils

├── args.py

├── __init__.py

├── utils.py

└── validation.pyAppendix D: 1st generation harness test results

(env) username@hostname:/path/to/kio$ pytest kio/tests/regression

===================================================== test session starts ======================================================

platform linux -- Python 3.6.4, pytest-3.4.1, py-1.5.2, pluggy-0.6.0

rootdir: /path/to/kio, inifile:

collected 98 items

kio/tests/regression/csv_to_kinetica/test_float.py . [ 1%]

kio/tests/regression/csv_to_kinetica/test_int.py . [ 2%]

kio/tests/regression/csv_to_kinetica/test_str.py . [ 3%]

kio/tests/regression/kinetica_to_csv/test_double.py . [ 4%]

kio/tests/regression/kinetica_to_csv/test_float.py . [ 5%]

kio/tests/regression/kinetica_to_csv/test_int.py . [ 6%]

kio/tests/regression/kinetica_to_csv/test_long.py . [ 7%]

kio/tests/regression/kinetica_to_csv/test_str.py . [ 8%]

kio/tests/regression/kinetica_to_kinetica/test_double.py . [ 9%]

kio/tests/regression/kinetica_to_kinetica/test_float.py . [ 10%]

kio/tests/regression/kinetica_to_kinetica/test_int.py . [ 11%]

kio/tests/regression/kinetica_to_kinetica/test_long.py . [ 12%]

kio/tests/regression/kinetica_to_kinetica/test_str.py . [ 13%]

kio/tests/regression/kinetica_to_parquet/test_char1.py . [ 14%]

kio/tests/regression/kinetica_to_parquet/test_char128.py . [ 15%]

kio/tests/regression/kinetica_to_parquet/test_char16.py . [ 16%]

kio/tests/regression/kinetica_to_parquet/test_char2.py . [ 17%]

kio/tests/regression/kinetica_to_parquet/test_char256.py . [ 18%]

kio/tests/regression/kinetica_to_parquet/test_char32.py . [ 19%]

kio/tests/regression/kinetica_to_parquet/test_char4.py . [ 20%]

kio/tests/regression/kinetica_to_parquet/test_char64.py . [ 21%]

kio/tests/regression/kinetica_to_parquet/test_char8.py . [ 22%]

kio/tests/regression/kinetica_to_parquet/test_float.py . [ 23%]

kio/tests/regression/kinetica_to_parquet/test_int.py . [ 24%]

kio/tests/regression/kinetica_to_postgresql/test_char1.py . [ 25%]

kio/tests/regression/kinetica_to_postgresql/test_char128.py . [ 26%]

kio/tests/regression/kinetica_to_postgresql/test_char16.py . [ 27%]

kio/tests/regression/kinetica_to_postgresql/test_char2.py . [ 28%]

kio/tests/regression/kinetica_to_postgresql/test_char256.py . [ 29%]

kio/tests/regression/kinetica_to_postgresql/test_char32.py . [ 30%]

kio/tests/regression/kinetica_to_postgresql/test_char4.py . [ 31%]

kio/tests/regression/kinetica_to_postgresql/test_char64.py . [ 32%]

kio/tests/regression/kinetica_to_postgresql/test_char8.py . [ 33%]

kio/tests/regression/kinetica_to_postgresql/test_date.py . [ 34%]

kio/tests/regression/kinetica_to_postgresql/test_datetime.py . [ 35%]

kio/tests/regression/kinetica_to_postgresql/test_int.py . [ 36%]

kio/tests/regression/kinetica_to_postgresql/test_int16.py . [ 37%]

kio/tests/regression/kinetica_to_postgresql/test_int8.py . [ 38%]

kio/tests/regression/kinetica_to_postgresql/test_misc.py . [ 39%]

kio/tests/regression/kinetica_to_postgresql/test_str.py . [ 40%]

kio/tests/regression/kinetica_to_postgresql/test_time.py . [ 41%]

kio/tests/regression/parquet_to_kinetica/test_char1.py ... [ 44%]

kio/tests/regression/parquet_to_kinetica/test_char128.py ... [ 47%]

kio/tests/regression/parquet_to_kinetica/test_char16.py ... [ 51%]

kio/tests/regression/parquet_to_kinetica/test_char2.py ... [ 54%]

kio/tests/regression/parquet_to_kinetica/test_char256.py ... [ 57%]

kio/tests/regression/parquet_to_kinetica/test_char32.py ... [ 60%]

kio/tests/regression/parquet_to_kinetica/test_char4.py ... [ 63%]

kio/tests/regression/parquet_to_kinetica/test_char64.py ... [ 66%]

kio/tests/regression/parquet_to_kinetica/test_char8.py ... [ 69%]

kio/tests/regression/parquet_to_kinetica/test_float.py . [ 70%]

kio/tests/regression/parquet_to_kinetica/test_int.py . [ 71%]

kio/tests/regression/postgresql_to_kinetica/test_char1.py ... [ 74%]

kio/tests/regression/postgresql_to_kinetica/test_char128.py ... [ 77%]

kio/tests/regression/postgresql_to_kinetica/test_char16.py ... [ 80%]

kio/tests/regression/postgresql_to_kinetica/test_char2.py ... [ 83%]

kio/tests/regression/postgresql_to_kinetica/test_char256.py ... [ 86%]

kio/tests/regression/postgresql_to_kinetica/test_char32.py ... [ 89%]

kio/tests/regression/postgresql_to_kinetica/test_char4.py ... [ 92%]

kio/tests/regression/postgresql_to_kinetica/test_char64.py ... [ 95%]

kio/tests/regression/postgresql_to_kinetica/test_char8.py ... [ 98%]

kio/tests/regression/postgresql_to_kinetica/test_int.py . [100%]

================================================== 98 passed in 14.62 seconds ==================================================Appendix E: 2nd generation harness test results

(env) username@hostname:/path/to/kio$ pytest -q kio/tests/regression_new/ -s

2018-07-10 13:28:01,892 - test_aws_s3_to_kinetica.py - INFO - BEGIN TEST CASES

2018-07-10 13:28:02,664 - test_aws_s3_to_kinetica.py - INFO - Test case "BASE CASE 1" executed successfully.

2018-07-10 13:28:04,288 - test_aws_s3_to_kinetica.py - INFO - Test case "DUPLICATE FILES 1" executed successfully.

2018-07-10 13:28:04,855 - test_aws_s3_to_kinetica.py - INFO - Test case "2018.06.14.0 AWS Credentials KIO CLI" executed successfully.

2018-07-10 13:28:04,875 - test_aws_s3_to_kinetica.py - INFO - END TEST CASES

.2018-07-10 13:28:04,875 - test_csv_to_kinetica.py - INFO - BEGIN TEST CASES

2018-07-10 13:28:04,996 - test_csv_to_kinetica.py - INFO - Test case "BASE CASE 1" executed successfully.

2018-07-10 13:28:05,023 - test_csv_to_kinetica.py - INFO - END TEST CASES

.2018-07-10 13:28:05,024 - test_kinetica_to_aws_s3.py - INFO - BEGIN TEST CASES

2018-07-10 13:28:05,584 - test_kinetica_to_aws_s3.py - INFO - Test case "BASE CASE 1" executed successfully.

2018-07-10 13:28:06,220 - test_kinetica_to_aws_s3.py - INFO - Test case "2018.06.14.0 AWS Credentials KIO CLI" executed successfully.

2018-07-10 13:28:06,288 - test_kinetica_to_aws_s3.py - INFO - END TEST CASES

.

3 passed in 5.14 secondsAppendix F: 3rd generation harness test results

(env) username@hostname:/path/to/kio$ pytest -q kio/tests/regression_new_v2/

..................... [100%]

21 passed in 14.59 seconds